The rereading Stephen King project continues.

Different Seasons (1982)

A collection of four novellas, three of which have been made into movies (supposedly the fourth is in the works), two of them (“Rita Hayworth and The Shawshank Redemption” and “The Body” aka Stand By Me) getting Oscar nods (Apt Pupil got mixed reviews). These stories don’t really have a horror or supernatural component, and are more character studies than anything. The most successful is “The Body,” with four slightly pre-teen friends circa 1960 setting off on a long trek to see a dead body. The relationships between the characters develop over the course of the journey, and the reaction isn’t what they anticipated. King doesn’t always get credit for his characters, as the scare factor is usually what everyone focuses on, but he really nails the mindset of that age extremely well. “Apt Pupil,” about a teenager’s unhealthy obsession with a former Nazi—and the hold they develop over each other—doesn’t quite do it for me; I can see why Dussander (the Nazi) would be afraid of Todd (the kid) blowing his cover, but why would the kid be afraid of Dussander threatening to reveal that Todd never turned him in? And why do they both start randomly killing winos? (A little is made of the fact that Dussander never speaks Todd’s name. The reason is not given in the story, and it was only a little bit later that I realized that Tod is the German word for death.)

The “twist” ending to “Rita Hayworth,” which you can kind of see coming (even given that I read these stories back in the early 1980s), does strain credulity just a tad, but it’s still a good story.

“The Breathing Method” was the only one of the quartet that I had no recollection of (and was not made into a movie...yet) and the tone and characters are a 180 from “The Body” (middle-aged New Yorker listening to a story by an elderly doctor about a patient he had in the 1930s). The “twist” ending to this twice-told tale makes me wonder how on Earth I had forgotten it, as it is quite bizarre. Oddly, it works.

Anyway, this is a pretty strong collection of non-horror stories that are just as compelling as the creepy stories.

Grade: A-

Christine (1983)

I was really girding my loins for this one, thinking (perhaps recollecting) that it was King almost literally jumping the shark. And yet, it turned out that I actually rather liked it. In a way, it's kind of like The Shining on wheels in that (well, like a lot of horror) it is about forces of evil tapping into an individual’s inner demons. In the case of Jack Torrance, it was being a short-tempered alcoholic. In the case of Christine’s Arnie Cunningham, it is being a pimply high school misfit. Christine isn’t really about a possessed car; it’s really about high school, and King taps into that mindset as easily as he tapped into the pre-teen mindset in “The Body.” Christine is very much a set of character studies—Arnie Cunningham, his only friend the football player Dennis Guilder, and his would-be girlfriend Leigh.

What I guess doesn’t entirely work—or at least the question I have—is exactly what was possessed. What I mean is, Arnie buys the car from a bitter, perpetually angry old Army vet named Roland LeBay. The car is deteriorating on LeBay’s lawn and he is selling it. Arnie sees it and, as a car aficionado, immediately falls in love with it and wants to restore it. He buys it from LeBay, and the car actually starts restoring itself. Then LeBay suddenly dies, and every time Christine starts driving by itself—and killing Arnie’s enemies—it turns out she is being driven by the ghost of LeBay. But...what was driving her before LeBay died? Meanwhile, Arnie starts psychologically (and in some ways physically) turning into LeBay. Why, if his ghost is still tooling around in the car? And no mention is made of how the car got evil, or was it that LeBay made it evil? I think it would have worked better if it had been a straightforward Shining-like possession without the added complication of LeBay. There is also a scene in which a character is killed by the car inside his own house; the car crashes into the living room and chases him around and up a staircase. Yeah, that was a bit much (it reminded me of a scene from Woody Allen’s Take the Money and Run).

What is also a bit distracting is that the first third of the book is written in the first person by Arnie’s friend Dennis. At about the one-third mark, Dennis is seriously injured in a football game and is confined to the hospital for a few months. The narration then shifts to third-person (in order, it’s obvious, to describe events that there is no way Dennis would be able to witness, even if he wasn’t in hospital), but then at the two-thirds mark, it shifts back to Dennis’ first-person narration. I get why King did this; he wanted the perspective of the teenage character (which works exceedingly well) as well as some vivid descriptions of Christine killing some people. A little “having your cake and eating it, too,” and it only slightly doesn’t work.

That said, I liked it a lot more than I was expecting to.

Grade: B-

Pet Sematary (1983)

From what I recall, this was the last “first run” King novel I read (I had the hardcover) before college and moving on to snootier fare. Like most of the titles in this project thus far, it ended up being better than I recall, even if you can see where it’s going. According to a new introduction written in 2000, this is the one book that even scared its author, to the extent that he held off submitting it to his publisher thinking he had finally gone “too far.”

Plot, in a nutshell: a Midwest doctor, his wife, and two kids move to rural Maine, into a house alongside a busy state highway that spells doom for pets. Behind their house is the local “pet sematary” where generations of spelling-challenged kids buried their pets, many of which had been claimed by speeding traffic on the road. (The set-up is virtually identical to King’s own situation when he got a teaching gig at the University of Maine.) However, just beyond the pet cemetery is an old Micmac burial ground that, like most Indian burial grounds, has some spooky local lore attached to it. When the family’s cat is run down on the road and killed, Louis (our hero) tests the local legend by burying it in the Indian burial ground...and the cat comes back, decidedly changed (the bits with the cat are the creepiest in the book). So, when Louis’s young son is killed on the road, he decides to see what would happen if...

The book references the classic short story “The Monkey’s Paw,” of which it is a more modern iteration. The book is also a meditation on the extent to which “dead is better,” in that there is an inherent danger in just about everyone’s fantasy of bringing the deceased back rather than remembering them as they were in life. King succeeds where other horror writers fail in that it’s not always about the scare factor; there are larger themes in these books for those who care to look for them—although they’re usually pretty obvious.

The interesting thing about King is that he identifies his characters’ lapses in logic; we know that Louis is behaving irrationally when he digs up his son’s body and lugs it to the Indian graveyard; he knows he is, as well, but he manages to rationalize it to himself...and to the reader. It actually kind of works. What I kind of throw a flag on is the generic use of anything Native American to have all sorts of supernatural effects. It was a convenient trope in old horror (for example, Poltergeist), but seems a bit hokey these days.

I did not see the movie version, but did enjoy The Ramones’ theme song. King was/is a big Ramones fan, and the refrain “Hey ho, let’s go” (from “Blitzkrieg Bop”) recurs throughout the book. At one point Louis checks into a motel under the name Dee Dee Ramone.

Grade: A-

Cycle of the Werewolf (1983)

I am not entirely certain what to make of this one. It’s basically a short story typeset so that it comes out to 120 pages, interspersed with color and black-and-white illustrations, some of which should be captioned “spoiler alert.” It is divided into 12 chapters, one for each month of the year, each built around a werewolf attack that occurs during that month’s full moon (King admits in an afterword that he played a bit loose with the lunar cycle so as to have the full moon coincide with various holidays; like that’s the biggest problem...).

It’s an interesting narrative experiment, but we never get all that invested in the town or the characters (as opposed to, say, ’Salem’s Lot) to really care all that much, and when we find out who the werewolf is (given away in one of the illustrations, actually) we’re not all that surprised, since we had only met the character fleetingly.

This was apparently made into the movie Silver Bullet (starring Corey Haim), which got decidedly mixed reviews (perhaps explained by the phrase “starring Corey Haim”).

Anyway, a nice quick read but not too thrilling.

Grade: C

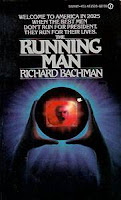

Up next is a collaboration with Peter Straub called The Talisman, which is a big, thick cube of a book, so that’ll take a while. Then there is the Richard Bachman weight-loss plan, another collection of short stories, and...It.